Today’s top AI Highlights:

First Large Visual Memory Model gives AI visual memory

Create multiple sub-agents in Claude Code

Apple's FastVLM: High-Resolution Vision AI That's Actually Fast

Lovable can now think, plan, and act like a senior engineer

Read time: 3 mins

AI today excels at conversation but struggles with video, much like someone still learning a new language.

Current LLMs such as Gemini top out at around one hour of video before they start producing significant hallucinations, while humans naturally retain decades of visual memories with remarkable clarity.

Memories.ai has introduced the world's first Large Visual Memory Model, engineered to provide AI with human-level visual memory. Created by a former Meta researcher, this system compresses, indexes, and retrieves video content across essentially unlimited durations.

The approach mimics natural human memory through specialized components for query handling, retrieval operations, selection processes, and reconstruction, allowing AI to perceive and remember visually like humans.
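To make the four stages above concrete, here is a toy sketch of a query, retrieve, select, reconstruct loop over an indexed video. It is not Memories.ai's implementation: the `Clip` type, the text captions standing in for compressed visual summaries, the bag-of-words `embed` function, and the `recall` helper are all assumptions made for illustration.

```python
from dataclasses import dataclass
from math import sqrt


@dataclass
class Clip:
    start_s: float
    end_s: float
    caption: str  # hypothetical stand-in for a compressed visual summary


def embed(text):
    """Toy bag-of-words embedding; a real system would use a learned encoder."""
    counts = {}
    for word in text.lower().split():
        counts[word] = counts.get(word, 0) + 1
    return counts


def cosine(a, b):
    dot = sum(a[w] * b.get(w, 0) for w in a)
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def recall(query, index, broad_k=3, keep=2):
    q = embed(query)                                   # 1. query handling
    ranked = sorted(index, key=lambda c: cosine(q, embed(c.caption)), reverse=True)
    candidates = ranked[:broad_k]                      # 2. broad retrieval
    selected = [c for c in candidates
                if cosine(q, embed(c.caption)) > 0][:keep]  # 3. selection
    return sorted(selected, key=lambda c: c.start_s)   # 4. reconstruction, in time order


index = [
    Clip(0, 30, "a red car parks outside the shop"),
    Clip(30, 60, "a person walks a dog past the shop"),
    Clip(60, 90, "the red car drives away"),
    Clip(90, 120, "empty street at night"),
]

hits = recall("red car", index)
for clip in hits:
    print(f"{clip.start_s:>5.0f}s to {clip.end_s:.0f}s: {clip.caption}")
```

Because only the compact index is searched, the cost of answering a question depends on the number of indexed clips rather than on re-reading the raw footage, which is the general idea behind handling very long videos.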

Key Features:

Boundless context handling - Unlike Gemini, which is limited to about one hour of video, this model processes virtually unlimited video lengths with minimal hallucination, successfully analyzing 10+ hours of content, including complete TV series.

Biology-inspired design - Features specialized models that replicate human memory functions like cue recognition, broad retrieval, detailed extraction, and memory synthesis for intuitive visual comprehension.

Production deployment - Already in use by security firms for threat monitoring, media organizations for content management, and marketing teams for trend tracking and influencer discovery.

Benchmark performance - Achieves state-of-the-art results across major video understanding benchmarks, including categorization, search, and Q&A with precise timing down to milliseconds.

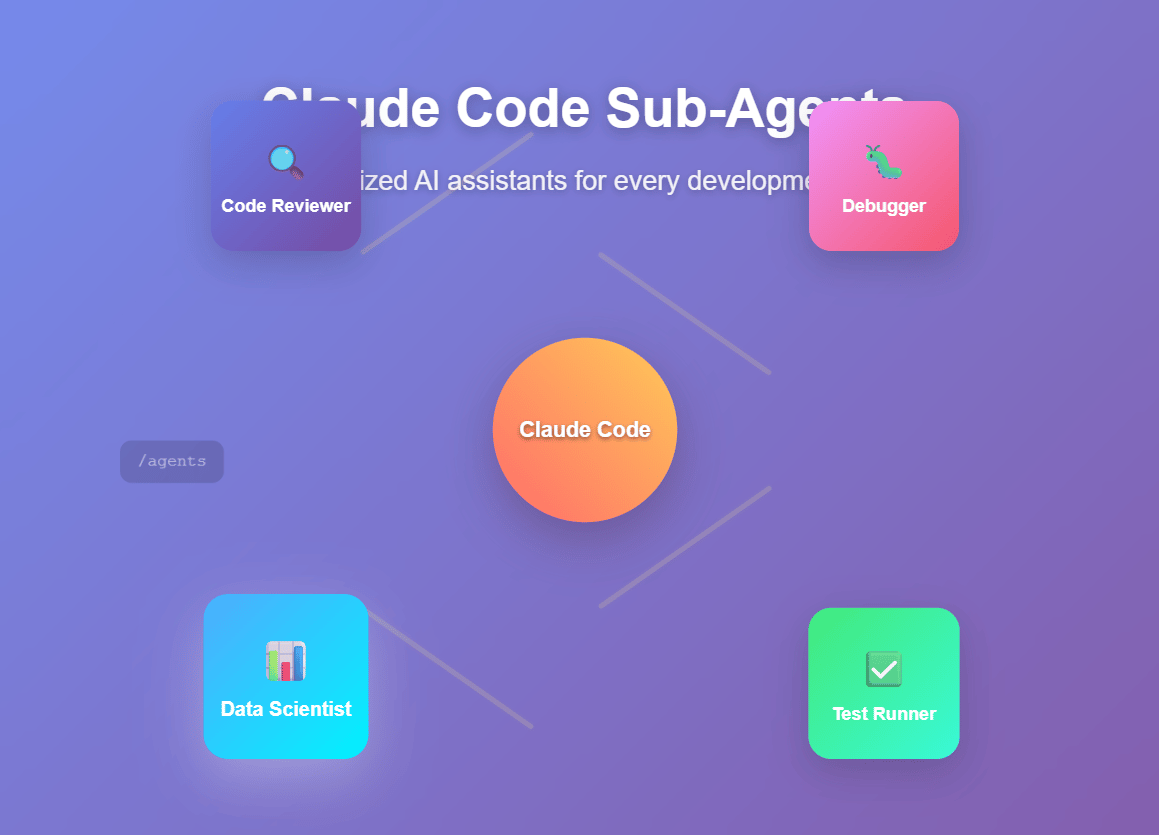

Claude Code just introduced a game-changing feature that transforms how developers work with AI assistance - specialized sub-agents.

Instead of using one generic AI for everything, you can now create focused AI specialists that handle specific tasks with their own context windows and tool permissions. Think of it as building your own team of AI experts.

What makes this powerful:

Each sub-agent operates independently with its own expertise area - a code reviewer that automatically checks your commits, a debugger that jumps in when tests fail, or a data scientist that handles your SQL queries. They work in separate contexts, keeping your main conversation clean while delivering specialized results.

Getting started is simple: Run /agents in Claude Code, describe what you need (or let Claude generate it for you), select the tools it should access, and you're done. Your sub-agent will automatically activate when relevant tasks come up, or you can invoke it explicitly.

Real-world impact: Developers are creating sub-agents for code reviews, test automation, database queries, and debugging. Each one becomes a reusable team asset that maintains consistent workflows across projects.

The feature supports both project-level agents (shared with your team) and personal agents (available across all your projects), with simple Markdown configuration files that integrate seamlessly with version control.
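For a sense of what such a Markdown file might look like, here is a small sketch that renders one. The frontmatter fields (`name`, `description`, `tools`), the tool names, and the `.claude/agents/` location reflect our understanding of the format and should be treated as assumptions; check the Claude Code docs before relying on them. The `render_subagent` and `write_subagent` helpers are hypothetical, written only for this example.

```python
from pathlib import Path


def render_subagent(name, description, tools, system_prompt):
    """Render a sub-agent definition as Markdown with YAML frontmatter.

    Field names are assumed, not confirmed by this newsletter. Values that
    contain a colon would need YAML quoting, which this sketch does not do.
    """
    frontmatter = [
        "---",
        f"name: {name}",
        f"description: {description}",
        f"tools: {', '.join(tools)}",
        "---",
    ]
    return "\n".join(frontmatter) + "\n\n" + system_prompt.strip() + "\n"


def write_subagent(text, name, root=Path(".")):
    """Save the file under the (assumed) project-level agents directory."""
    path = root / ".claude" / "agents" / f"{name}.md"
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(text, encoding="utf-8")
    return path


reviewer = render_subagent(
    name="code-reviewer",
    description="Reviews recent changes for quality and security issues.",
    tools=["Read", "Grep", "Glob", "Bash"],
    system_prompt="You are a senior code reviewer. Check the latest diff and "
                  "report issues ordered by severity.",
)
print(reviewer)
```

Since the result is a plain text file, committing it alongside the code is what lets a team share the same sub-agent through version control.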

This isn't just about convenience - it's about building persistent AI expertise that grows with your codebase and scales with your team.

Apple researchers just solved a major problem in AI vision models - the tradeoff between accuracy and speed when processing high-resolution images.

Vision Language Models (VLMs) that can see and understand images work much better with high-resolution inputs, but this creates a bottleneck. Higher resolution means longer processing times, both for analyzing the image and generating responses, making them too slow for real-time applications.
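A quick back-of-the-envelope calculation shows why resolution is so costly. The sketch below assumes a generic patch-based vision encoder with 14-pixel patches, a common choice in ViT-style models; it is not a description of FastVLM's own encoder, and `visual_tokens` is a hypothetical helper.

```python
def visual_tokens(image_size_px, patch_size_px=14):
    """Tokens produced for a square image by a plain patch-based encoder.

    Assumes one token per non-overlapping patch and no token pooling.
    """
    patches_per_side = image_size_px // patch_size_px
    return patches_per_side ** 2


for size in (336, 672, 1344):
    print(f"{size}x{size} px -> {visual_tokens(size)} visual tokens")
```

Under these assumptions, each doubling of the image side quadruples the number of visual tokens the language model has to process, which is where the slowdown comes from.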

The breakthrough: FastVLM uses a hybrid visual encoder architecture specifically designed for high-resolution images. Instead of the traditional approach that slows down dramatically with image quality, FastVLM maintains speed while preserving accuracy.

Real impact: This enables practical applications like accessibility tools, UI navigation, robotics, and gaming that need both visual precision and instant responses. The technology is designed to run on-device for privacy, making it perfect for mobile applications.

Example: When shown a street sign image, low-resolution models might incorrectly identify a "Do Not Enter" sign as a "Bus Stop" sign, while FastVLM with high-resolution input gets it right - without the typical speed penalty.

Apple has released the inference code, model checkpoints, and an iOS/macOS demo app, signaling their commitment to advancing practical AI applications that work in real-world scenarios.

This research, accepted to CVPR 2025, represents a significant step toward making sophisticated visual AI both accurate and responsive enough for everyday use.

Lovable now comes with a new Agent Mode in beta. It can reason through tasks, break problems down, explore your codebase, and execute autonomously, slashing build errors by over 90%.

Developer-like workflow: Interprets requests, explores codebases, uncovers missing context, and auto-fixes issues

Real-time capabilities: Live web search for documentation, on-demand image creation, and codebase exploration

Cost structure: Simple requests under 1 credit, complex builds cost more based on actual resource usage

That’s all for today! See you tomorrow with more AI news.

Don’t forget to share this newsletter on your social channels and tag Wings of AI to support us!